Public Member Functions | |

| def | __init__ |

| def | __init__ |

| def | buildListOfBadFiles |

| def | buildListOfFiles |

| def | convertTimeToRun |

| def | datasetSnippet |

| def | dataType |

| def | dump_cff |

| def | extractFileSizes |

| def | fileInfoList |

| def | fileList |

| def | forcerunrange |

| def | getForceRunRangeFunction |

| def | getPrimaryDatasetEntries |

| def | magneticField |

| def | magneticFieldForRun |

| def | name |

| def | parentDataset |

| def | predefined |

| def | printInfo |

| def | runList |

Public Member Functions inherited from dataset.BaseDataset | |

| def | __init__ |

| def | buildListOfBadFiles |

| def | buildListOfFiles |

| def | extractFileSizes |

| def | getPrimaryDatasetEntries |

| def | listOfFiles |

| def | listOfGoodFiles |

| def | listOfGoodFilesWithPrescale |

| def | printFiles |

| def | printInfo |

Public Attributes | |

| bad_files | |

| castorDir | |

| files | |

| filesAndSizes | |

| good_files | |

| lfnDir | |

| maskExists | |

| report | |

Public Attributes inherited from dataset.BaseDataset | |

| bad_files | |

| dbsInstance | |

| files | |

| filesAndSizes | |

| good_files | |

| name | |

| pattern | |

| primaryDatasetEntries | |

| report | |

| run_range | |

| user | |

Static Private Attributes | |

| tuple | __dummy_source_template |

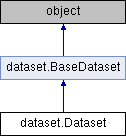

Definition at line 14 of file dataset.py.

| def dataset.Dataset.__init__ | ( | self, datasetName, dasLimit = 0, tryPredefinedFirst = True, cmssw = os.environ["CMSSW_BASE"], cmsswrelease = os.environ["CMSSW_RELEASE_BASE"] | ) |

Definition at line 16 of file dataset.py.

Referenced by dataset.Dataset.__init__().

| def dataset.Dataset.__init__ | ( | self, name, user, pattern = '.*root' | ) |

Definition at line 264 of file dataset.py.

References dataset.Dataset.__init__().

|

private |

Yield successive n-sized chunks from theList.

Definition at line 79 of file dataset.py.

Referenced by dataset.Dataset.__createSnippet().
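The chunking behaviour described above ("Yield successive n-sized chunks from theList") can be illustrated with a minimal generator. This is a sketch, not the code from dataset.py: the function name `chunks`, the argument check, and the sample file names are assumptions made for illustration.

```python
def chunks(the_list, n):
    """Yield successive n-sized chunks from the_list.

    The last chunk is shorter when len(the_list) is not a multiple of n.
    (Illustrative stand-in; the real private helper may differ.)
    """
    if n <= 0:
        raise ValueError("n must be a positive integer")
    for start in range(0, len(the_list), n):
        yield the_list[start:start + n]


# Hypothetical file names, split into groups of two.
files = ["a.root", "b.root", "c.root", "d.root", "e.root"]
chunked = list(chunks(files, 2))
```

A generator is a natural fit here because the caller can consume one group at a time, for example to emit a long file list in blocks of bounded size.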

|

private |

Definition at line 117 of file dataset.py.

References dataset.Dataset.__chunks(), dataset.Dataset.__dummy_source_template, dataset.Dataset.__findInJson(), dataset.Dataset.__firstusedrun, dataset.Dataset.__getRunList(), dataset.Dataset.__lastusedrun, dataset.Dataset.convertTimeToRun(), dataset.Dataset.fileList(), dataset.Dataset.getForceRunRangeFunction(), join(), list(), bookConverter.max, min(), dataset.Dataset.predefined(), python.rootplot.root2matplotlib.replace(), and split.

Referenced by dataset.Dataset.datasetSnippet(), and dataset.Dataset.dump_cff().

|

private |

Definition at line 617 of file dataset.py.

References dataset.Dataset.convertTimeToRun().

Referenced by dataset.Dataset.convertTimeToRun().

|

private |

Definition at line 608 of file dataset.py.

Referenced by dataset.Dataset.convertTimeToRun().

|

private |

|

private |

|

private |

Definition at line 287 of file dataset.py.

References dataset.Dataset.__findInJson().

Referenced by dataset.Dataset.__createSnippet(), dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), dataset.Dataset.__getDataType(), dataset.Dataset.__getFileInfoList(), dataset.Dataset.__getMagneticField(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.__getParentDataset(), dataset.Dataset.__getRunList(), dataset.Dataset.convertTimeToRun(), and dataset.Dataset.fileList().

|

private |

Definition at line 339 of file dataset.py.

References dataset.Dataset.__findInJson().

Referenced by dataset.Dataset.__getDataType(), dataset.Dataset.__getFileInfoList(), dataset.Dataset.__getMagneticField(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.__getParentDataset(), dataset.Dataset.__getRunList(), and dataset.Dataset.convertTimeToRun().

|

private |

Definition at line 369 of file dataset.py.

References dataset.Dataset.__filename, dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), dataset.Dataset.__name, dataset.Dataset.__predefined, ElectronMVAID.ElectronMVAID.name, counter.Counter.name, entry.name, average.Average.name, histograms.Histograms.name, cond::persistency::TAG::NAME.name, TmModule.name, cond::persistency::GLOBAL_TAG::NAME.name, core.autovars.NTupleVariable.name, cond::persistency::TAG::TIME_TYPE.name, genericValidation.GenericValidation.name, cond::persistency::GLOBAL_TAG::VALIDITY.name, cond::persistency::COND_LOG_TABLE::EXECTIME.name, cond::persistency::TAG::OBJECT_TYPE.name, preexistingValidation.PreexistingValidation.name, cond::persistency::GLOBAL_TAG::DESCRIPTION.name, ora::RecordSpecImpl::Item.name, cond::persistency::COND_LOG_TABLE::IOVTAG.name, cond::persistency::TAG::SYNCHRONIZATION.name, cond::persistency::GLOBAL_TAG::RELEASE.name, cond::persistency::COND_LOG_TABLE::USERTEXT.name, cond::persistency::TAG::END_OF_VALIDITY.name, cond::persistency::GLOBAL_TAG::SNAPSHOT_TIME.name, cond::persistency::GLOBAL_TAG::INSERTION_TIME.name, cond::persistency::TAG::DESCRIPTION.name, cond::persistency::GTEditorData.name, cond::persistency::TAG::LAST_VALIDATED_TIME.name, FWTGeoRecoGeometry::Info.name, Types._Untracked.name, cond::persistency::TAG::INSERTION_TIME.name, dataset.BaseDataset.name, cond::persistency::TAG::MODIFICATION_TIME.name, personalPlayback.Applet.name, ParameterSet.name, PixelDCSObject< class >::Item.name, analyzer.Analyzer.name, DQMRivetClient::LumiOption.name, MagCylinder.name, alignment.Alignment.name, ParSet.name, DQMRivetClient::ScaleFactorOption.name, SingleObjectCondition.name, EgHLTOfflineSummaryClient::SumHistBinData.name, XMLHTRZeroSuppressionLoader::_loaderBaseConfig.name, DQMGenericClient::EfficOption.name, XMLRBXPedestalsLoader::_loaderBaseConfig.name, cond::persistency::GTProxyData.name, core.autovars.NTupleObjectType.name, MyWatcher.name, edm::PathTimingSummary.name, cond::TimeTypeSpecs.name, 
lumi::TriggerInfo.name, edm::PathSummary.name, cond::persistency::GLOBAL_TAG_MAP::GLOBAL_TAG_NAME.name, PixelEndcapLinkMaker::Item.name, perftools::EdmEventSize::BranchRecord.name, FWTableViewManager::TableEntry.name, cond::persistency::GLOBAL_TAG_MAP::RECORD.name, PixelBarrelLinkMaker::Item.name, Mapper::definition< ScannerT >.name, EcalLogicID.name, cond::persistency::GLOBAL_TAG_MAP::LABEL.name, cond::persistency::GLOBAL_TAG_MAP::TAG_NAME.name, ExpressionHisto< T >.name, McSelector.name, RecoSelector.name, XMLProcessor::_loaderBaseConfig.name, DQMGenericClient::ProfileOption.name, cond::persistency::PAYLOAD::HASH.name, TreeCrawler.Package.name, cond::persistency::PAYLOAD::OBJECT_TYPE.name, cond::persistency::PAYLOAD::DATA.name, cond::persistency::PAYLOAD::STREAMER_INFO.name, cond::persistency::PAYLOAD::VERSION.name, MagGeoBuilderFromDDD::volumeHandle.name, cond::persistency::PAYLOAD::INSERTION_TIME.name, DQMGenericClient::NormOption.name, options.ConnectionHLTMenu.name, DQMGenericClient::CDOption.name, FastHFShowerLibrary.name, h4DSegm.name, PhysicsTools::Calibration::Variable.name, cond::TagInfo_t.name, EDMtoMEConverter.name, looper.Looper.name, MEtoEDM< T >::MEtoEDMObject.name, cond::persistency::IOV::TAG_NAME.name, TrackerSectorStruct.name, cond::persistency::IOV::SINCE.name, cond::persistency::IOV::PAYLOAD_HASH.name, cond::persistency::IOV::INSERTION_TIME.name, MuonGeometrySanityCheckPoint.name, config.Analyzer.name, config.Service.name, h2DSegm.name, options.HLTProcessOptions.name, core.autovars.NTupleSubObject.name, DQMNet::WaitObject.name, AlpgenParameterName.name, SiStripMonitorDigi.name, core.autovars.NTupleObject.name, cond::persistency::TAG_MIGRATION::SOURCE_ACCOUNT.name, cond::persistency::TAG_MIGRATION::SOURCE_TAG.name, cond::persistency::TAG_MIGRATION::TAG_NAME.name, cond::persistency::TAG_MIGRATION::STATUS_CODE.name, cond::persistency::TAG_MIGRATION::INSERTION_TIME.name, core.autovars.NTupleCollection.name, 
FastTimerService::LuminosityDescription.name, cond::persistency::PAYLOAD_MIGRATION::SOURCE_ACCOUNT.name, cond::persistency::PAYLOAD_MIGRATION::SOURCE_TOKEN.name, cond::persistency::PAYLOAD_MIGRATION::PAYLOAD_HASH.name, cond::persistency::PAYLOAD_MIGRATION::INSERTION_TIME.name, conddblib.Tag.name, conddblib.GlobalTag.name, personalPlayback.FrameworkJob.name, plotscripts.SawTeethFunction.name, FastTimerService::ProcessDescription.name, hTMaxCell.name, cscdqm::ParHistoDef.name, BeautifulSoup.Tag.name, TiXmlAttribute.name, BeautifulSoup.SoupStrainer.name, and python.rootplot.root2matplotlib.replace().

Referenced by dataset.Dataset.dataType().

Definition at line 529 of file dataset.py.

References dataset.Dataset.__fileInfoList, dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), dataset.Dataset.__name, dataset.Dataset.__parentFileInfoList, dataset.Dataset.__predefined, ElectronMVAID.ElectronMVAID.name, counter.Counter.name, entry.name, average.Average.name, histograms.Histograms.name, cond::persistency::TAG::NAME.name, TmModule.name, cond::persistency::GLOBAL_TAG::NAME.name, core.autovars.NTupleVariable.name, cond::persistency::TAG::TIME_TYPE.name, genericValidation.GenericValidation.name, cond::persistency::GLOBAL_TAG::VALIDITY.name, cond::persistency::COND_LOG_TABLE::EXECTIME.name, cond::persistency::TAG::OBJECT_TYPE.name, preexistingValidation.PreexistingValidation.name, cond::persistency::GLOBAL_TAG::DESCRIPTION.name, ora::RecordSpecImpl::Item.name, cond::persistency::COND_LOG_TABLE::IOVTAG.name, cond::persistency::TAG::SYNCHRONIZATION.name, cond::persistency::GLOBAL_TAG::RELEASE.name, cond::persistency::GLOBAL_TAG::SNAPSHOT_TIME.name, cond::persistency::COND_LOG_TABLE::USERTEXT.name, cond::persistency::TAG::END_OF_VALIDITY.name, cond::persistency::GLOBAL_TAG::INSERTION_TIME.name, cond::persistency::TAG::DESCRIPTION.name, cond::persistency::GTEditorData.name, cond::persistency::TAG::LAST_VALIDATED_TIME.name, FWTGeoRecoGeometry::Info.name, Types._Untracked.name, cond::persistency::TAG::INSERTION_TIME.name, dataset.BaseDataset.name, cond::persistency::TAG::MODIFICATION_TIME.name, personalPlayback.Applet.name, ParameterSet.name, PixelDCSObject< class >::Item.name, analyzer.Analyzer.name, DQMRivetClient::LumiOption.name, MagCylinder.name, alignment.Alignment.name, ParSet.name, DQMRivetClient::ScaleFactorOption.name, SingleObjectCondition.name, EgHLTOfflineSummaryClient::SumHistBinData.name, XMLHTRZeroSuppressionLoader::_loaderBaseConfig.name, DQMGenericClient::EfficOption.name, XMLRBXPedestalsLoader::_loaderBaseConfig.name, cond::persistency::GTProxyData.name, core.autovars.NTupleObjectType.name, MyWatcher.name, 
edm::PathTimingSummary.name, lumi::TriggerInfo.name, cond::TimeTypeSpecs.name, edm::PathSummary.name, cond::persistency::GLOBAL_TAG_MAP::GLOBAL_TAG_NAME.name, perftools::EdmEventSize::BranchRecord.name, PixelEndcapLinkMaker::Item.name, FWTableViewManager::TableEntry.name, cond::persistency::GLOBAL_TAG_MAP::RECORD.name, PixelBarrelLinkMaker::Item.name, Mapper::definition< ScannerT >.name, EcalLogicID.name, cond::persistency::GLOBAL_TAG_MAP::LABEL.name, cond::persistency::GLOBAL_TAG_MAP::TAG_NAME.name, ExpressionHisto< T >.name, McSelector.name, RecoSelector.name, XMLProcessor::_loaderBaseConfig.name, cond::persistency::PAYLOAD::HASH.name, TreeCrawler.Package.name, DQMGenericClient::ProfileOption.name, cond::persistency::PAYLOAD::OBJECT_TYPE.name, cond::persistency::PAYLOAD::DATA.name, cond::persistency::PAYLOAD::STREAMER_INFO.name, cond::persistency::PAYLOAD::VERSION.name, MagGeoBuilderFromDDD::volumeHandle.name, cond::persistency::PAYLOAD::INSERTION_TIME.name, DQMGenericClient::NormOption.name, options.ConnectionHLTMenu.name, DQMGenericClient::CDOption.name, FastHFShowerLibrary.name, h4DSegm.name, PhysicsTools::Calibration::Variable.name, EDMtoMEConverter.name, cond::TagInfo_t.name, looper.Looper.name, MEtoEDM< T >::MEtoEDMObject.name, cond::persistency::IOV::TAG_NAME.name, cond::persistency::IOV::SINCE.name, TrackerSectorStruct.name, cond::persistency::IOV::PAYLOAD_HASH.name, cond::persistency::IOV::INSERTION_TIME.name, MuonGeometrySanityCheckPoint.name, config.Analyzer.name, config.Service.name, h2DSegm.name, options.HLTProcessOptions.name, core.autovars.NTupleSubObject.name, DQMNet::WaitObject.name, AlpgenParameterName.name, SiStripMonitorDigi.name, core.autovars.NTupleObject.name, cond::persistency::TAG_MIGRATION::SOURCE_ACCOUNT.name, cond::persistency::TAG_MIGRATION::SOURCE_TAG.name, cond::persistency::TAG_MIGRATION::TAG_NAME.name, cond::persistency::TAG_MIGRATION::STATUS_CODE.name, cond::persistency::TAG_MIGRATION::INSERTION_TIME.name, 
core.autovars.NTupleCollection.name, FastTimerService::LuminosityDescription.name, cond::persistency::PAYLOAD_MIGRATION::SOURCE_ACCOUNT.name, cond::persistency::PAYLOAD_MIGRATION::SOURCE_TOKEN.name, cond::persistency::PAYLOAD_MIGRATION::PAYLOAD_HASH.name, cond::persistency::PAYLOAD_MIGRATION::INSERTION_TIME.name, conddblib.Tag.name, conddblib.GlobalTag.name, personalPlayback.FrameworkJob.name, plotscripts.SawTeethFunction.name, FastTimerService::ProcessDescription.name, hTMaxCell.name, cscdqm::ParHistoDef.name, BeautifulSoup.Tag.name, TiXmlAttribute.name, BeautifulSoup.SoupStrainer.name, and dataset.Dataset.parentDataset().

Referenced by dataset.Dataset.fileInfoList().

|

private |

Definition at line 404 of file dataset.py.

References dataset.Dataset.__cmssw, dataset.Dataset.__cmsswrelease, dataset.Dataset.__dataType, dataset.Dataset.__filename, dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), dataset.Dataset.__name, dataset.Dataset.__predefined, and python.rootplot.root2matplotlib.replace().

Referenced by dataset.Dataset.magneticField().

|

private |

For MC, this returns the same value as the previous function. For data, it gets the magnetic field from the runs. This is important for deciding which template to use for offlinevalidation.

Definition at line 475 of file dataset.py.

References dataset.Dataset.__dataType, dataset.Dataset.__filename, dataset.Dataset.__findInJson(), dataset.Dataset.__firstusedrun, dataset.Dataset.__getData(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.__lastusedrun, dataset.Dataset.__magneticField, dataset.Dataset.__name, dataset.Dataset.__predefined, funct.abs(), python.rootplot.root2matplotlib.replace(), and split.

Referenced by dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.dump_cff(), and dataset.Dataset.magneticFieldForRun().

|

private |

Definition at line 394 of file dataset.py.

References dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), and dataset.Dataset.__name.

Referenced by dataset.Dataset.parentDataset().

|

private |

Definition at line 595 of file dataset.py.

References dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), dataset.Dataset.__name, and dataset.Dataset.__runList.

Referenced by dataset.Dataset.__createSnippet(), dataset.Dataset.convertTimeToRun(), and dataset.Dataset.runList().

| def dataset.Dataset.buildListOfBadFiles | ( | self | ) |

Fills the list of bad files from the IntegrityCheck log. When the integrity check file is not available, all files are considered good.

Definition at line 275 of file dataset.py.
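The fallback rule described above (no integrity-check report means every file counts as good) could look roughly like the sketch below. The function name `split_good_bad`, the shape of `report` (a dict mapping file name to a boolean), and the choice to treat files missing from the report as good are all assumptions; the real method fills `bad_files` and `good_files` on the object and reads the report from the IntegrityCheck log.

```python
def split_good_bad(files, report=None):
    """Split files into (good, bad) using an integrity report.

    report: hypothetical dict {file_name: True if good, False if bad},
            or None when no integrity-check file is available.
    """
    if report is None:
        # No report available: consider every file good.
        return list(files), []
    good, bad = [], []
    for name in files:
        # Assumption: a file absent from the report is treated as good.
        if report.get(name, True):
            good.append(name)
        else:
            bad.append(name)
    return good, bad


all_files = ["f1.root", "f2.root", "f3.root"]
good_no_report, bad_no_report = split_good_bad(all_files)
good, bad = split_good_bad(all_files, {"f1.root": True, "f2.root": False})
```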

| def dataset.Dataset.buildListOfFiles | ( | self, pattern = '.*root' | ) |

Fills the list of files, taking all ROOT files in the castor directory that match the pattern.

Definition at line 271 of file dataset.py.
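To show how a pattern such as the default `'.*root'` selects files, here is a minimal sketch using Python's standard `re` module. The helper name `match_files` and the use of `re.match` (anchored at the start of the name only) are assumptions; the actual method lists the castor directory itself and may match differently.

```python
import re


def match_files(names, pattern=".*root"):
    """Return the names that match the regular expression `pattern`.

    re.match anchors only at the start of the string, so '.*root'
    accepts any name that contains 'root' somewhere.
    """
    regex = re.compile(pattern)
    return [name for name in names if regex.match(name)]


# Hypothetical directory listing.
listing = ["tree_1.root", "log.txt", "tree_2.root", "tree_2.root.bak"]
selected = match_files(listing)
strict = match_files(listing, pattern=r".*\.root$")
```

Note that the default pattern also accepts `tree_2.root.bak`; a stricter pattern such as `.*\.root$` restricts the match to names that end in `.root`.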

| def dataset.Dataset.convertTimeToRun | ( | self, begin = None, end = None, firstRun = None, lastRun = None, shortTuple = True | ) |

Definition at line 622 of file dataset.py.

References dataset.Dataset.__dateString(), dataset.Dataset.__datetime(), dataset.Dataset.__find_ge(), dataset.Dataset.__find_lt(), dataset.Dataset.__findInJson(), dataset.Dataset.__getData(), dataset.Dataset.__getRunList(), and dataset.Dataset.__name.

Referenced by dataset.Dataset.__createSnippet(), and dataset.Dataset.__dateString().

| def dataset.Dataset.datasetSnippet | ( | self, jsonPath = None, begin = None, end = None, firstRun = None, lastRun = None, crab = False, parent = False | ) |

Definition at line 706 of file dataset.py.

References dataset.Dataset.__createSnippet(), dataset.Dataset.__filename, dataset.Dataset.__name, dataset.Dataset.__official, dataset.Dataset.__origName, dataset.Dataset.__predefined, and dataset.Dataset.dump_cff().

Referenced by dataset.Dataset.parentDataset().

| def dataset.Dataset.dataType | ( | self | ) |

Definition at line 687 of file dataset.py.

References dataset.Dataset.__dataType, and dataset.Dataset.__getDataType().

| def dataset.Dataset.dump_cff | ( | self, outName = None, jsonPath = None, begin = None, end = None, firstRun = None, lastRun = None, parent = False | ) |

Definition at line 759 of file dataset.py.

References dataset.Dataset.__alreadyStored, dataset.Dataset.__cmssw, dataset.Dataset.__createSnippet(), dataset.Dataset.__dataType, dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.__magneticField, dataset.Dataset.__name, python.rootplot.root2matplotlib.replace(), and split.

Referenced by dataset.Dataset.datasetSnippet().

| def dataset.Dataset.extractFileSizes | ( | self | ) |

Get the file size for each file, from the eos ls -l command.

Definition at line 306 of file dataset.py.

References dataset.EOSDataset.castorDir, and dataset.Dataset.castorDir.
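Extracting sizes from an `ls -l` style listing can be sketched as below. This assumes the usual nine-column long format (permissions, links, owner, group, size, month, day, time, name); the real `eos ls -l` output and the way the method invokes the command are not shown in this page, so the function `parse_ls_l` and the sample text are purely illustrative.

```python
def parse_ls_l(output):
    """Map file name -> size in bytes from `ls -l` style text.

    Assumes the size is the 5th whitespace-separated field and the
    file name is everything after the 8th field.
    """
    sizes = {}
    for line in output.splitlines():
        fields = line.split(None, 8)
        # Skip summary lines such as 'total 2' and anything malformed.
        if len(fields) < 9 or not fields[4].isdigit():
            continue
        sizes[fields[8]] = int(fields[4])
    return sizes


# Hypothetical listing text.
sample = (
    "total 2\n"
    "-rw-r--r--   1 user group   1048576 Jan 10 12:00 file_1.root\n"
    "-rw-r--r--   1 user group      2048 Jan 10 12:01 file_2.root\n"
)
file_sizes = parse_ls_l(sample)
```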

Definition at line 838 of file dataset.py.

References dataset.Dataset.__dasLimit, and dataset.Dataset.__getFileInfoList().

Referenced by dataset.Dataset.fileList().

Definition at line 823 of file dataset.py.

References dataset.Dataset.__fileList, dataset.Dataset.__findInJson(), dataset.Dataset.__parentFileList, and dataset.Dataset.fileInfoList().

Referenced by dataset.Dataset.__createSnippet().

| def dataset.Dataset.forcerunrange | ( | self, firstRun, lastRun, s | ) |

s must be in the format run1:lum1-run2:lum2.

Definition at line 309 of file dataset.py.

References dataset.Dataset.__firstusedrun, dataset.Dataset.__lastusedrun, and split.

Referenced by dataset.Dataset.getForceRunRangeFunction().
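The `run1:lum1-run2:lum2` format can be parsed and clipped to a run window as in the sketch below. This is not the code from dataset.py: the function name `clip_lumi_range`, the plain-string argument, the `None` return for ranges outside the window, and the `LAST_LUMI` placeholder are assumptions chosen to make the example self-contained.

```python
# Hypothetical placeholder for "last lumi section of a run".
LAST_LUMI = 999999


def clip_lumi_range(first_run, last_run, s):
    """Clip 'run1:lum1-run2:lum2' to the window [first_run, last_run].

    Returns the clipped string, or None when the range lies entirely
    outside the window.
    """
    begin, end = s.split("-")
    run1, lum1 = (int(x) for x in begin.split(":"))
    run2, lum2 = (int(x) for x in end.split(":"))
    if run2 < first_run or run1 > last_run:
        return None
    if run1 < first_run:
        # Range starts too early: restart at the window's first run.
        run1, lum1 = first_run, 1
    if run2 > last_run:
        # Range ends too late: stop at the window's last run.
        run2, lum2 = last_run, LAST_LUMI
    return "%d:%d-%d:%d" % (run1, lum1, run2, lum2)


inside = clip_lumi_range(100, 200, "150:5-160:10")
clipped_low = clip_lumi_range(100, 200, "50:3-150:7")
outside = clip_lumi_range(100, 200, "10:1-20:5")
```

Given the reference from `getForceRunRangeFunction()`, a function of this kind is presumably applied to each range found in a JSON lumi mask, but that usage is an inference from the cross-references rather than something this page states.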

| def dataset.Dataset.getForceRunRangeFunction | ( | self, firstRun, lastRun | ) |

Definition at line 334 of file dataset.py.

References dataset.Dataset.forcerunrange().

Referenced by dataset.Dataset.__createSnippet().

| def dataset.Dataset.getPrimaryDatasetEntries | ( | self | ) |

Definition at line 326 of file dataset.py.

References runall.testit.report, dataset.BaseDataset.report, ALIUtils.report, and WorkFlowRunner.WorkFlowRunner.report.

| def dataset.Dataset.magneticField | ( | self | ) |

Definition at line 692 of file dataset.py.

References dataset.Dataset.__getMagneticField(), and dataset.Dataset.__magneticField.

| def dataset.Dataset.magneticFieldForRun | ( | self, run = -1 | ) |

Definition at line 697 of file dataset.py.

References dataset.Dataset.__getMagneticFieldForRun().

| def dataset.Dataset.name | ( | self | ) |

Definition at line 841 of file dataset.py.

References dataset.Dataset.__name.

Referenced by cuy.divideElement.__init__(), cuy.plotElement.__init__(), cuy.additionElement.__init__(), cuy.superimposeElement.__init__(), cuy.graphElement.__init__(), config.CFG.__str__(), validation.Sample.digest(), VIDSelectorBase.VIDSelectorBase.initialize(), and Vispa.Views.PropertyView.Property.valueChanged().

| def dataset.Dataset.parentDataset | ( | self | ) |

Definition at line 700 of file dataset.py.

References dataset.Dataset.__getParentDataset(), dataset.Dataset.__parentDataset, and dataset.Dataset.datasetSnippet().

Referenced by dataset.Dataset.__getFileInfoList().

| def dataset.Dataset.predefined | ( | self | ) |

Definition at line 844 of file dataset.py.

References dataset.Dataset.__predefined.

Referenced by dataset.Dataset.__createSnippet().

| def dataset.Dataset.printInfo | ( | self | ) |

Definition at line 321 of file dataset.py.

References dataset.EOSDataset.castorDir, dataset.Dataset.castorDir, dataset.Dataset.lfnDir, ElectronMVAID.ElectronMVAID.name, counter.Counter.name, average.Average.name, entry.name, histograms.Histograms.name, TmModule.name, cond::persistency::GLOBAL_TAG::NAME.name, core.autovars.NTupleVariable.name, cond::persistency::TAG::NAME.name, cond::persistency::TAG::TIME_TYPE.name, cond::persistency::GLOBAL_TAG::VALIDITY.name, genericValidation.GenericValidation.name, cond::persistency::TAG::OBJECT_TYPE.name, cond::persistency::GLOBAL_TAG::DESCRIPTION.name, preexistingValidation.PreexistingValidation.name, cond::persistency::COND_LOG_TABLE::EXECTIME.name, cond::persistency::TAG::SYNCHRONIZATION.name, cond::persistency::GLOBAL_TAG::RELEASE.name, ora::RecordSpecImpl::Item.name, cond::persistency::COND_LOG_TABLE::IOVTAG.name, cond::persistency::COND_LOG_TABLE::USERTEXT.name, cond::persistency::TAG::END_OF_VALIDITY.name, cond::persistency::GLOBAL_TAG::SNAPSHOT_TIME.name, cond::persistency::GTEditorData.name, cond::persistency::TAG::DESCRIPTION.name, cond::persistency::GLOBAL_TAG::INSERTION_TIME.name, cond::persistency::TAG::LAST_VALIDATED_TIME.name, FWTGeoRecoGeometry::Info.name, Types._Untracked.name, cond::persistency::TAG::INSERTION_TIME.name, cond::persistency::TAG::MODIFICATION_TIME.name, dataset.BaseDataset.name, personalPlayback.Applet.name, ParameterSet.name, PixelDCSObject< class >::Item.name, analyzer.Analyzer.name, DQMRivetClient::LumiOption.name, MagCylinder.name, alignment.Alignment.name, ParSet.name, DQMRivetClient::ScaleFactorOption.name, SingleObjectCondition.name, EgHLTOfflineSummaryClient::SumHistBinData.name, DQMGenericClient::EfficOption.name, cond::persistency::GTProxyData.name, core.autovars.NTupleObjectType.name, XMLHTRZeroSuppressionLoader::_loaderBaseConfig.name, XMLRBXPedestalsLoader::_loaderBaseConfig.name, MyWatcher.name, edm::PathTimingSummary.name, cond::TimeTypeSpecs.name, lumi::TriggerInfo.name, edm::PathSummary.name, 
PixelEndcapLinkMaker::Item.name, perftools::EdmEventSize::BranchRecord.name, cond::persistency::GLOBAL_TAG_MAP::GLOBAL_TAG_NAME.name, FWTableViewManager::TableEntry.name, cond::persistency::GLOBAL_TAG_MAP::RECORD.name, PixelBarrelLinkMaker::Item.name, EcalLogicID.name, Mapper::definition< ScannerT >.name, cond::persistency::GLOBAL_TAG_MAP::LABEL.name, ExpressionHisto< T >.name, McSelector.name, cond::persistency::GLOBAL_TAG_MAP::TAG_NAME.name, XMLProcessor::_loaderBaseConfig.name, RecoSelector.name, DQMGenericClient::ProfileOption.name, cond::persistency::PAYLOAD::HASH.name, TreeCrawler.Package.name, cond::persistency::PAYLOAD::OBJECT_TYPE.name, cond::persistency::PAYLOAD::DATA.name, cond::persistency::PAYLOAD::STREAMER_INFO.name, MagGeoBuilderFromDDD::volumeHandle.name, cond::persistency::PAYLOAD::VERSION.name, cond::persistency::PAYLOAD::INSERTION_TIME.name, DQMGenericClient::NormOption.name, options.ConnectionHLTMenu.name, DQMGenericClient::CDOption.name, FastHFShowerLibrary.name, h4DSegm.name, PhysicsTools::Calibration::Variable.name, EDMtoMEConverter.name, cond::TagInfo_t.name, looper.Looper.name, MEtoEDM< T >::MEtoEDMObject.name, cond::persistency::IOV::TAG_NAME.name, TrackerSectorStruct.name, cond::persistency::IOV::SINCE.name, cond::persistency::IOV::PAYLOAD_HASH.name, cond::persistency::IOV::INSERTION_TIME.name, MuonGeometrySanityCheckPoint.name, config.Analyzer.name, config.Service.name, h2DSegm.name, options.HLTProcessOptions.name, core.autovars.NTupleSubObject.name, DQMNet::WaitObject.name, AlpgenParameterName.name, SiStripMonitorDigi.name, core.autovars.NTupleObject.name, cond::persistency::TAG_MIGRATION::SOURCE_ACCOUNT.name, cond::persistency::TAG_MIGRATION::SOURCE_TAG.name, cond::persistency::TAG_MIGRATION::TAG_NAME.name, cond::persistency::TAG_MIGRATION::STATUS_CODE.name, cond::persistency::TAG_MIGRATION::INSERTION_TIME.name, core.autovars.NTupleCollection.name, FastTimerService::LuminosityDescription.name, 
cond::persistency::PAYLOAD_MIGRATION::SOURCE_ACCOUNT.name, cond::persistency::PAYLOAD_MIGRATION::SOURCE_TOKEN.name, cond::persistency::PAYLOAD_MIGRATION::PAYLOAD_HASH.name, cond::persistency::PAYLOAD_MIGRATION::INSERTION_TIME.name, conddblib.Tag.name, conddblib.GlobalTag.name, personalPlayback.FrameworkJob.name, plotscripts.SawTeethFunction.name, FastTimerService::ProcessDescription.name, hTMaxCell.name, cscdqm::ParHistoDef.name, BeautifulSoup.Tag.name, TiXmlAttribute.name, and BeautifulSoup.SoupStrainer.name.

| def dataset.Dataset.runList | ( | self | ) |

Definition at line 847 of file dataset.py.

References dataset.Dataset.__getRunList(), and dataset.Dataset.__runList.

|

private |

Definition at line 23 of file dataset.py.

Referenced by dataset.Dataset.dump_cff().

|

private |

Definition at line 24 of file dataset.py.

Referenced by dataset.Dataset.__getMagneticField(), and dataset.Dataset.dump_cff().

|

private |

Definition at line 25 of file dataset.py.

Referenced by dataset.Dataset.__getMagneticField().

|

private |

Definition at line 19 of file dataset.py.

Referenced by dataset.Dataset.fileInfoList().

|

private |

Definition at line 76 of file dataset.py.

Referenced by dataset.Dataset.__getMagneticField(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.dataType(), and dataset.Dataset.dump_cff().

|

staticprivate |

Definition at line 103 of file dataset.py.

Referenced by dataset.Dataset.__createSnippet().

|

private |

Definition at line 21 of file dataset.py.

Referenced by dataset.Dataset.__getFileInfoList().

|

private |

Definition at line 20 of file dataset.py.

Referenced by dataset.Dataset.fileList().

|

private |

Definition at line 53 of file dataset.py.

Referenced by dataset.Dataset.__getDataType(), dataset.Dataset.__getMagneticField(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.datasetSnippet(), csvReporter.csvReporter.writeRow(), and csvReporter.csvReporter.writeRows().

|

private |

Definition at line 26 of file dataset.py.

Referenced by dataset.Dataset.__createSnippet(), dataset.Dataset.__getMagneticFieldForRun(), and dataset.Dataset.forcerunrange().

|

private |

Definition at line 27 of file dataset.py.

Referenced by dataset.Dataset.__createSnippet(), dataset.Dataset.__getMagneticFieldForRun(), and dataset.Dataset.forcerunrange().

|

private |

Definition at line 77 of file dataset.py.

Referenced by dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.dump_cff(), and dataset.Dataset.magneticField().

|

private |

Definition at line 17 of file dataset.py.

Referenced by dataset.Dataset.__getDataType(), dataset.Dataset.__getFileInfoList(), dataset.Dataset.__getMagneticField(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.__getParentDataset(), dataset.Dataset.__getRunList(), dataset.Dataset.convertTimeToRun(), dataset.Dataset.datasetSnippet(), dataset.Dataset.dump_cff(), Config.Process.dumpConfig(), Config.Process.dumpPython(), dataset.Dataset.name(), and Config.Process.name_().

|

private |

Definition at line 34 of file dataset.py.

Referenced by dataset.Dataset.datasetSnippet().

|

private |

Definition at line 18 of file dataset.py.

Referenced by dataset.Dataset.datasetSnippet().

|

private |

Definition at line 28 of file dataset.py.

Referenced by dataset.Dataset.parentDataset().

|

private |

Definition at line 30 of file dataset.py.

Referenced by dataset.Dataset.__getFileInfoList().

|

private |

Definition at line 29 of file dataset.py.

Referenced by dataset.Dataset.fileList().

|

private |

Definition at line 50 of file dataset.py.

Referenced by dataset.Dataset.__getDataType(), dataset.Dataset.__getFileInfoList(), dataset.Dataset.__getMagneticField(), dataset.Dataset.__getMagneticFieldForRun(), dataset.Dataset.datasetSnippet(), and dataset.Dataset.predefined().

|

private |

Definition at line 22 of file dataset.py.

Referenced by dataset.Dataset.__getRunList(), and dataset.Dataset.runList().

| dataset.Dataset.bad_files |

Definition at line 282 of file dataset.py.

| dataset.Dataset.castorDir |

Definition at line 266 of file dataset.py.

Referenced by dataset.Dataset.extractFileSizes(), and dataset.Dataset.printInfo().

| dataset.Dataset.files |

Definition at line 273 of file dataset.py.

| dataset.Dataset.filesAndSizes |

Definition at line 311 of file dataset.py.

| dataset.Dataset.good_files |

Definition at line 283 of file dataset.py.

| dataset.Dataset.lfnDir |

Definition at line 265 of file dataset.py.

Referenced by dataset.Dataset.printInfo().

| dataset.Dataset.maskExists |

Definition at line 267 of file dataset.py.

| dataset.Dataset.report |

Definition at line 268 of file dataset.py.

Referenced by addOnTests.testit.run().

1.8.5
